Think you can beat the algorithm? Here’s how.

Most of us like to believe we are careful, discerning consumers of information. We read widely. We follow people we trust. We check sources. We tell ourselves we’re harder to fool than “other people”. We think we think for ourselves. But here’s the uncomfortable part: mostly, we don’t.

Not because we’re stupid or careless, but because the information environment we live in no longer works the way we assume it does.

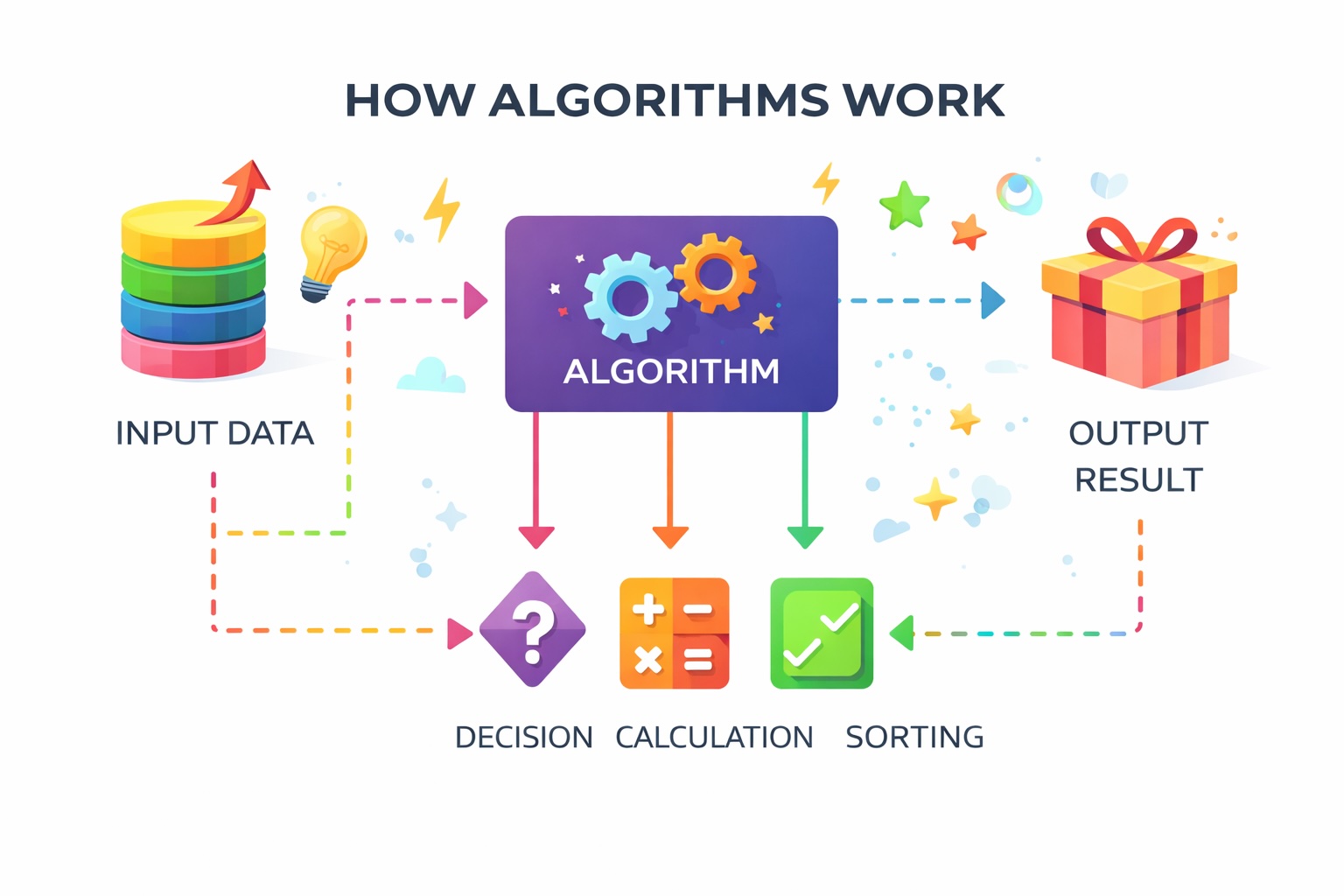

The algorithm doesn’t tell us what to think. It decides what we see, what we don’t, and how often we see it, until certain conclusions begin to feel like our own. That isn’t a conspiracy theory. It’s the business model.

Algorithms aren’t neutral librarians. They are optimisation machines. Their job is simple: to keep you engaged. And what keeps people engaged isn’t calm explanation or moral complexity. It’s emotion. Outrage. Fear. Vindication. The feeling of being absolutely right. If something makes you angry, you share it. If it makes you feel validated, you share it. If it confirms what you already believe, you linger. The algorithm notices – and gives you more of the same. Over time, this creates an illusion of choice: the sense that you’ve arrived at your worldview through independent thought, when in reality, your information diet has been heavily curated. You’re not seeing everything. You’re seeing what keeps you reacting.

This matters everywhere. But it matters especially when it comes to Israel and Hamas. Since 7 October 2023, many Jews have asked the same question in disbelief: how can people possibly see this so differently? How can Hamas be minimised, excused, or erased from the story? How can Israel be discussed only as a concept – power, occupation, aggression – without context, history, or human cost? The instinctive answer is often bad faith. Or blind hatred. Sometimes that’s true. But very often, something simpler – and more unsettling – is at work. People aren’t seeing the same inputs.

Someone scrolling through one feed sees footage of Israeli families shattered by terror, rockets fired from civilian areas, hostages dragged into Gaza. Someone else sees a steady stream of civilian suffering in Gaza, stripped of context, stripped of Hamas, framed emotionally and repeatedly until outrage feels inevitable. Both believe they are “well informed”. Both feel morally certain. Both feel the other side is unreachable. This is what happens when algorithms reward certainty and punish doubt. Nuance performs badly. Complexity travels slowly. Outrage spreads fast. The result isn’t just disagreement – it’s fragmentation. A breakdown in shared reality. Facts bounce off emotional certainty. Corrections feel like attacks. Explanation sounds like justification. Silence is interpreted as guilt. And crucially, none of us are immune.

The danger isn’t that people believe false things. It’s that we become deeply confident about what feels true to us without realising how narrow and emotionally engineered our information stream has become. For Jewish communities – including here in South Africa – this creates a double pressure. On one side, there is a genuine sense of being besieged by hostile or distorted narratives. On the other, there is a temptation to retreat into informational safe spaces that feel comforting and affirming. Neither response is wrong. But neither restores control. Because simply knowing that algorithms exist doesn’t neutralise their power. Being aware that outrage spreads doesn’t stop it from working on us. Emotion still does what emotion does.

What does help is a shift in posture. Instead of asking only, “Is this true?”, a better first question is, “Why am I seeing this now?”

Instead of asking, “How can they not understand?” a more useful question is, “What information environment are they living in?”

This doesn’t resolve political disagreement. It doesn’t magically persuade people acting in bad faith. But it does something quieter and more important: it restores humility. And with humility comes resilience.

Resilience, in this context, doesn’t mean being tougher or louder. It means resisting constant emotional hijacking. It means slowing down before sharing. Reading past the headline. Not letting the most extreme content set the emotional temperature of your day. It means deliberately widening your inputs – even when that’s uncomfortable. And recognising when you’re being pushed to react rather than think. Because when algorithms decide what rises to the top of our feeds, they don’t just influence opinions. They shape emotional weather: what feels urgent, what feels threatening, what feels obvious. Once emotion is primed, reason often follows obediently behind.

The real challenge for communities that value education, debate, and moral clarity isn’t simply to “counter misinformation”. It’s to rebuild the habit of independent judgement in an environment designed to erode it. That takes discipline. It takes restraint. And it starts with acknowledging something difficult: none of us are as autonomous in our thinking as we would like to believe. In an age of engineered perception, discernment isn’t a personality trait. It’s a practice – one that has to be actively defended.

- Paula Slier is a veteran journalist and foreign correspondent who has reported from conflict zones across the Middle East, Africa and Europe. She writes on media, geopolitics and information warfare.